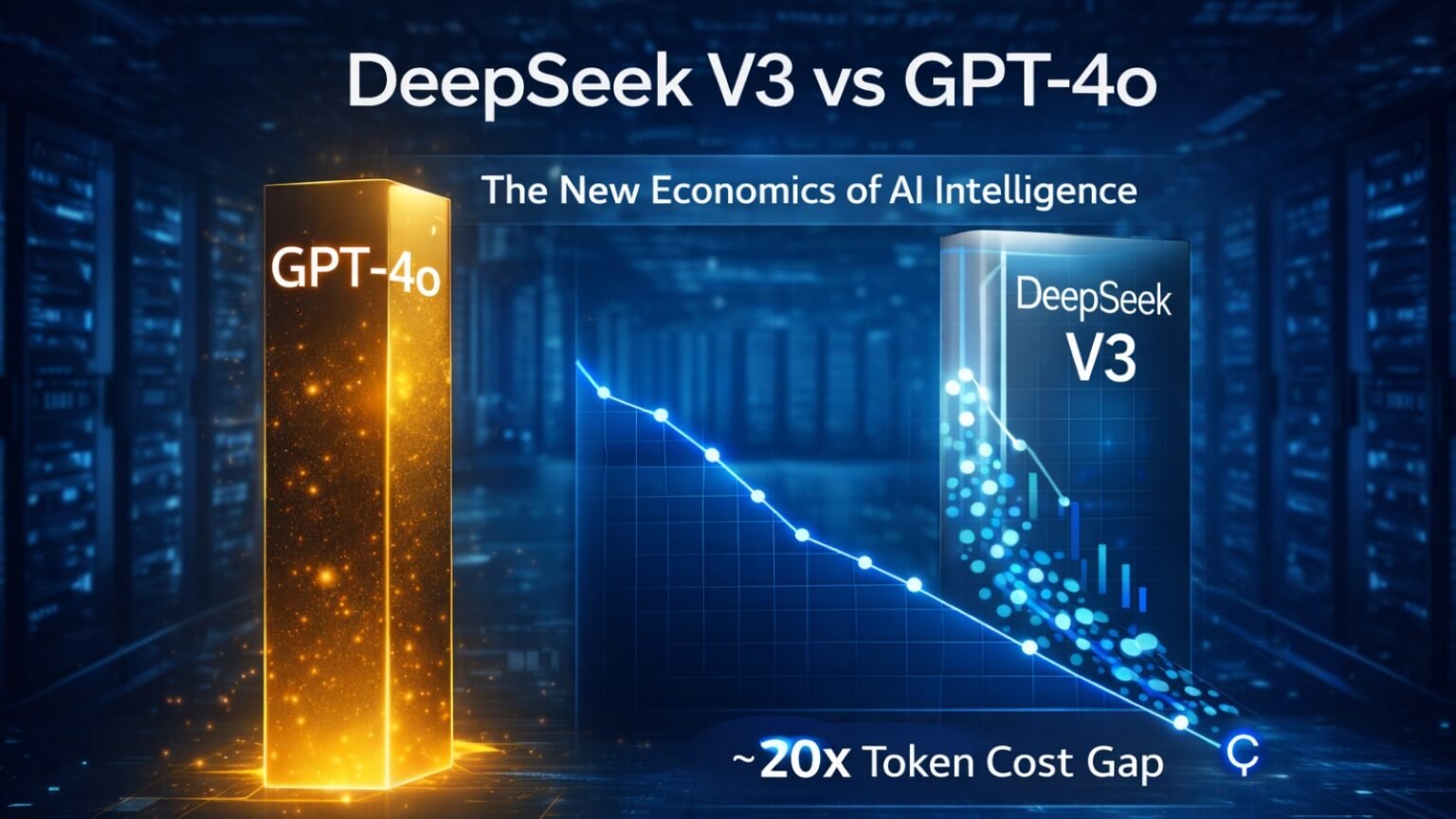

DeepSeek V3 vs GPT-4o is no longer just a model comparison — it represents a structural shift in how enterprises allocate capital to artificial intelligence.

For the past three years, the AI race focused on intelligence supremacy. In 2026, the battlefield has shifted to cost efficiency, orchestration, and scalable deployment.

DeepSeek V3 vs GPT-4o: Breaking the AI Cost Ceiling

The defining signal of 2026 is simple: intelligence has become cheaper — dramatically cheaper.

Published API list prices indicate:

| Feature | GPT-4o | DeepSeek V3 | Difference |
|---|---|---|---|
| Input price (per 1M tokens) | ~$2.50 | ~$0.14 | ~18x lower |
| Output price (per 1M tokens) | ~$10.00 | ~$0.28 | ~36x lower |
| Architecture | Dense (est.) | Mixture of Experts | Sparse activation |
| Context window | 128k | 128k | Parity |
| Multimodal | Yes | Text/code only | GPT-4o advantage |

The implication is not simply discount pricing; it is structural. This shift reflects broader systemic pressures discussed in our analysis of The Agentic AI Technical Debt Crisis.

When output tokens cost $10 per million, teams optimize prompts carefully.

When output tokens cost under $0.30, teams allow models to loop, reflect, and retry.

This changes system design entirely.
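One way to see this is to ask how many reflect-and-retry iterations fit inside a fixed per-task budget. The Python sketch below uses the list prices from the pricing table. The $0.05 budget and the per-iteration token counts (4,000 input, 500 output) are hypothetical assumptions, not measurements.

```python
# List prices in USD per million tokens (from the pricing table).
PRICES = {
    "gpt-4o": {"input": 2.50, "output": 10.00},
    "deepseek-v3": {"input": 0.14, "output": 0.28},
}

def iteration_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of a single model call with the given token counts."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000

def max_iterations(model: str, budget_usd: float,
                   input_tokens: int = 4_000, output_tokens: int = 500) -> int:
    """How many reflect/retry iterations fit in a per-task budget.

    The default per-iteration token counts are hypothetical.
    """
    return int(budget_usd // iteration_cost(model, input_tokens, output_tokens))

for model in PRICES:
    print(f"{model}: {max_iterations(model, budget_usd=0.05)} iterations")
```

Under these assumptions the same $0.05 buys 3 iterations on GPT-4o and 71 on DeepSeek V3. At that point the loop is no longer something to ration.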

Architecture Shift: Why DeepSeek V3 Is Cheaper

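DeepSeek V3 uses a Mixture-of-Experts (MoE) architecture. According to DeepSeek’s technical report, it has 671B total parameters, of which about 37B are activated for each token. Instead of running the entire network for every token, a router sends each token to a small subset of specialized “expert” sub-networks.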

This sparse activation cuts the compute needed per token, and with it the GPU cost of serving each request.
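The routing idea can be illustrated with a toy top-k gate in plain Python. This is a generic MoE sketch, not DeepSeek V3’s actual router, which is considerably more elaborate. The expert count, `k`, router logits, and the scalar "experts" are all hypothetical.

```python
import math
from typing import Callable, List

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x: float, router_logits: List[float],
                experts: List[Callable[[float], float]], k: int = 2):
    """Route one token to its top-k experts and mix their outputs.

    Only k of len(experts) expert functions are evaluated; skipping the
    rest is where sparse activation saves compute.
    """
    # Pick the k experts with the highest router scores.
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Renormalize gate weights over the selected experts only.
    gates = softmax([router_logits[i] for i in top])
    output = sum(g * experts[i](x) for g, i in zip(gates, top))
    return output, top

# Hypothetical toy experts: each one just scales its input differently.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0)]
logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]

y, active = moe_forward(1.0, logits, experts, k=2)
print(f"active experts: {active}, fraction used: {len(active) / len(experts):.2f}")
print(f"output: {y:.4f}")
```

Here only 2 of 8 experts run for the token. A dense layer of the same total size would evaluate all of them.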

By contrast, OpenAI has not disclosed GPT-4o’s architecture. It is commonly assumed to use a more densely activated structure optimized for multimodal integration and safety layers.

The result:

• DeepSeek optimizes compute efficiency

• GPT-4o optimizes integration and user experience

This is not a quality debate — it is a design philosophy difference.

The Enterprise Math: Agentic Systems vs Chat Interfaces

Traditional chatbot interaction:

~1,000 tokens per query.

Agentic systems:

Planning → reflection → tool usage → validation

Often 40,000–60,000 tokens per task.
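One reason agentic tasks reach this range is that each step typically re-sends the accumulated context as input. The Python sketch below models that growth. The prompt size, step count, and per-step token counts are hypothetical values chosen for illustration.

```python
def agent_token_usage(prompt_tokens: int, steps: int,
                      output_per_step: int, tool_tokens_per_step: int):
    """Estimate total input and output tokens for a simple agent loop.

    Assumes each step re-sends the full context as input, the model
    generates `output_per_step` tokens, and a tool result of
    `tool_tokens_per_step` tokens is appended before the next step.
    """
    context = prompt_tokens
    total_input = 0
    total_output = 0
    for _ in range(steps):
        total_input += context                               # full context re-sent
        total_output += output_per_step                      # model's reply
        context += output_per_step + tool_tokens_per_step    # history grows
    return total_input, total_output

# Hypothetical task: 2,000-token prompt, 7 plan/act/reflect steps,
# 500 generated tokens and a 1,000-token tool result per step.
inp, out = agent_token_usage(2_000, 7, 500, 1_000)
print(f"input: {inp:,} tokens, output: {out:,} tokens, total: {inp + out:,}")
# input: 45,500 tokens, output: 3,500 tokens, total: 49,000
```

In this sketch input tokens outnumber output tokens about 13 to 1. For agents, the input price therefore matters at least as much as the output price.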

Example Scenario:

• 50k input tokens

• 5k output tokens

Approximate cost comparison:

GPT-4o → ~$0.18 per run

DeepSeek V3 → ~$0.008 per run

That difference determines whether agents can run continuously in the background.

This is why CFOs are now influencing model selection.
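The per-run figures follow directly from the list prices. The Python sketch below reproduces the arithmetic for the 50k-input, 5k-output scenario and scales it to 1,000 runs to show how the gap compounds for always-on agents. The 1,000-run volume is an arbitrary illustration.

```python
# List prices from the pricing table, in USD per million tokens: (input, output).
PRICES_PER_MILLION = {
    "GPT-4o": (2.50, 10.00),
    "DeepSeek V3": (0.14, 0.28),
}

def run_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one agent run with the given token counts."""
    in_price, out_price = PRICES_PER_MILLION[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000

for model in PRICES_PER_MILLION:
    per_run = run_cost(model, 50_000, 5_000)
    print(f"{model}: ${per_run:.4f} per run, ${per_run * 1_000:,.2f} per 1,000 runs")
# GPT-4o: $0.1750 per run, $175.00 per 1,000 runs
# DeepSeek V3: $0.0084 per run, $8.40 per 1,000 runs
```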

USA vs UK Pricing & Deployment Strategy

USA Market Strategy

In the US, enterprises typically evaluate:

• List-price API cost (pre-tax)

• Volume-based discount tiers

• Enterprise support contracts

A common strategy emerging:

Tier 1 (Customer-facing UX): GPT-4o

Tier 2 (Backend processing): DeepSeek V3

Model routers (e.g., gateway orchestration layers) distribute workload dynamically.
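A router of this kind can start as a simple rule over request attributes. The Python sketch below encodes the two-tier split and also sends image or audio input to GPT-4o, since DeepSeek V3 is text/code only. The `Request` fields, the routing rule, and the model identifier strings are hypothetical placeholders, not the API of any particular gateway product.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute the names your provider uses.
TIER_1_MODEL = "gpt-4o"        # customer-facing UX
TIER_2_MODEL = "deepseek-v3"   # backend processing

@dataclass
class Request:
    customer_facing: bool
    has_image_or_audio: bool = False

def route(request: Request) -> str:
    """Send customer-facing or multimodal traffic to Tier 1, everything else to Tier 2."""
    if request.customer_facing or request.has_image_or_audio:
        return TIER_1_MODEL
    return TIER_2_MODEL

print(route(Request(customer_facing=True)))    # gpt-4o
print(route(Request(customer_facing=False)))   # deepseek-v3
```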

UK Market Strategy

In the UK, cost analysis includes:

• VAT impact (20%)

• Data residency concerns

• Cloud hosting costs

Self-hosting or regional deployment can raise DeepSeek’s effective cost to ~$0.40–$0.60 per million tokens, yet it remains significantly below GPT-4o pricing tiers.

For compliance-heavy sectors, hybrid routing is becoming standard.
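To see how VAT shifts the comparison, the Python sketch below applies the UK standard VAT rate of 20% to the input-token list prices. It assumes VAT is charged and not reclaimed, which makes it a worst-case view. It also compares GPT-4o’s VAT-inclusive input price with the ~$0.40–$0.60 self-hosted DeepSeek range quoted above. Treating that range as directly comparable to an input price is itself an assumption.

```python
UK_VAT_RATE = 0.20  # UK standard VAT rate

def with_vat(price_usd: float, vat_rate: float = UK_VAT_RATE) -> float:
    """Gross up a pre-tax price by VAT (assumes VAT is charged and not reclaimed)."""
    return price_usd * (1 + vat_rate)

# Input-token list prices in USD per million tokens.
gpt4o_input = with_vat(2.50)
deepseek_api_input = with_vat(0.14)

# Effective self-hosted/regional DeepSeek cost range quoted in the text.
self_hosted_low, self_hosted_high = 0.40, 0.60

print(f"GPT-4o input incl. VAT: ${gpt4o_input:.2f}/M")
print(f"DeepSeek V3 API input incl. VAT: ${deepseek_api_input:.3f}/M")
print(f"GPT-4o vs self-hosted DeepSeek: "
      f"{gpt4o_input / self_hosted_high:.1f}x to {gpt4o_input / self_hosted_low:.1f}x")
```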

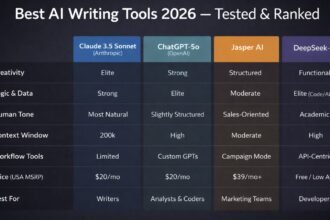

Buying Strategy: Which Model Should You Choose?

Upgrade Matrix

| Use Case | Recommended Model | Why |
|---|---|---|
| Creative Marketing | GPT-4o | Nuanced multimodal output |
| Backend Data Processing | DeepSeek V3 | Cost-efficient bulk reasoning |
| Autonomous Coding Agents | DeepSeek V3 | Loop economics viable |
| Vision/Voice Apps | GPT-4o | Native multimodal capability |
| Internal RAG Systems | DeepSeek V3 | High-volume summarization |

The Bottom Line

Do not cancel OpenAI outright.

Instead:

• Set DeepSeek V3 as default for high-volume tasks

• Route premium multimodal work to GPT-4o

• Implement an orchestration layer early

Cost diversification is now strategic risk management.
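These recommendations can be sketched as a single orchestration function. DeepSeek V3 is the default, GPT-4o handles multimodal work, and the function falls back to the other provider when a call fails. `call_model` is a hypothetical stand-in for whatever SDK or gateway you actually use. Catching `RuntimeError` is a placeholder for your provider’s real error types.

```python
from typing import Callable, List

def choose_chain(is_multimodal: bool) -> List[str]:
    """Ordered list of models to try for a request."""
    if is_multimodal:
        return ["gpt-4o"]                  # DeepSeek V3 is text/code only
    return ["deepseek-v3", "gpt-4o"]       # cheap default, premium fallback

def complete(prompt: str, is_multimodal: bool,
             call_model: Callable[[str, str], str]) -> str:
    """Try each model in order and return the first successful response."""
    errors = []
    for model in choose_chain(is_multimodal):
        try:
            return call_model(model, prompt)
        except RuntimeError as exc:        # placeholder for real provider errors
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))

# Hypothetical stand-in for a real SDK call; simulates a DeepSeek outage.
def fake_call_model(model: str, prompt: str) -> str:
    if model == "deepseek-v3":
        raise RuntimeError("503 Service Unavailable")
    return f"[{model}] answer to: {prompt}"

print(complete("Summarize Q3 tickets", is_multimodal=False, call_model=fake_call_model))
# [gpt-4o] answer to: Summarize Q3 tickets
```

Keeping the fallback order in one function makes the provider mix a configuration decision, not something scattered across application code.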

The Capital Signal: Where Value Moves Next

As model costs compress, venture capital shifts upward in the stack.

Value migrates from:

Foundation Models → Orchestration → Proprietary Data → Workflow Integration.

Generic “wrapper” applications face pressure.

Execution systems with embedded workflows will dominate.

Conclusion

DeepSeek V3 vs GPT-4o is not a war of intelligence — it is a redefinition of AI economics.

Cheaper intelligence enables more loops.

More loops enable autonomy.

Autonomy reshapes labor models.

Why This Matters

When intelligence becomes inexpensive, architecture becomes the moat.

The long-term advantage will not belong to the smartest model — but to the system that deploys intelligence most efficiently and compliantly.